Datasets

A Dataset corresponds to your original text/image/tabular data.

Cleanlab Studio can analyze and train/deploy models on diverse types of datasets. This page outlines how to format your data and the available options.

Modalities

Cleanlab Studio supports datasets of the following modalities:

- Tabular (structured data stored in tables with numeric/categorical/string columns, e.g. financial reports, sensor measurements)

- Text (e.g. customer service requests, reports, LLM outputs)

- Image (e.g. product images, photographs, satellite imagery)

Text/Tabular

Text/tabular datasets are structured datasets composed of rows and columns, with each row representing an individual data point. Text datasets contain a single text sample for each data point, while tabular datasets can have many predictive columns present for each data point. Text/tabular datasets can be uploaded in multiple formats, including CSV, JSON, Excel, and DataFrame.

For more information on how to use text/tabular datasets, see our tabular and text quickstart tutorials.

Image

Image datasets are datasets composed of rows of images with attached metadata (including but not limited to labels). Image datasets can be uploaded in multiple formats. If your image files are stored locally, you can use one of the ZIP upload formats. If your image files are hosted (on an Internet-accessible web server or storage platform, like S3 or Google Drive), you can upload your dataset by including links to your external images via an external image column in a CSV, JSON, Excel, or DataFrame upload.

For more information on how to use image datasets, see our image quickstart tutorial.

Machine Learning Task

Although you do not need to select a machine learning task type when uploading a dataset to Cleanlab Studio, different tasks require different formatting. To make sure you format your dataset correctly, we recommend that you first review the different task types and format your dataset to match your desired task ahead of time. For more information on ML task types, see our projects guide.

Multi-class

In a multi-class classification task, the objective is to categorize data points into one of K distinct classes, e.g. classifying animals as one of “cat”, “dog”, “bird”.

To format your dataset for multi-class classification, ensure your dataset includes a column containing the class each row belongs to and follow the appropriate structure for your modality and file format.

Unlabeled data

To include unlabeled rows (i.e. rows without annotations) in your multi-class dataset, simply leave their values in the label column empty/blank.

Multi-label

In a multi-label classification task, each data point can belong to multiple (or no) classes, e.g. assigning multiple categories to news articles.

For multi-label classification, your dataset’s label column should be formatted as a comma-separated string of classes, e.g. “politics,economics” (there should be no whitespace between labels). Note: for image datasets, you must use the Metadata or External Media upload formats.
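As a minimal sketch of the label format described above, the helper below joins a list of class names into a comma-separated string with no whitespace around the commas. The function name `format_multilabel` is hypothetical and is not part of any Cleanlab Studio API.

```python
def format_multilabel(classes):
    """Join class names into a comma-separated label string without whitespace."""
    cleaned = [name.strip() for name in classes]
    for name in cleaned:
        # A comma inside a class name would be indistinguishable from a separator.
        if "," in name:
            raise ValueError(f"class name may not contain a comma: {name!r}")
    return ",".join(cleaned)


label = format_multilabel(["politics", " economics "])
print(label)
```

An empty list yields an empty string, which corresponds to a row annotated as belonging to none of the classes.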

Unlabeled data vs. Empty labels

For multi-label datasets, there’s an important distinction between unlabeled rows and rows with empty labels. Unlabeled rows are data points where the label(s) are unknown. You can use Cleanlab Studio to determine whether these rows belong to any of your dataset’s classes. Rows with empty labels are data points that have no labels – they have been annotated to indicate that they don’t belong to any of your dataset’s classes.

When uploading a CSV or Excel dataset, any empty values in your label column will be interpreted as unlabeled rows rather than rows with empty labels. To distinguish between the two in your dataset, you must use JSON file format or DataFrame format.

You can represent these two types of data points in the JSON file format using the following values:

- empty labels: "" (empty string)

- unlabeled: null

You can represent these two types of data points in a DataFrame upload using the following values:

- empty labels: "" (empty string)

- unlabeled: None or pd.NA

Note: If you only have empty labels (but no unlabeled data) you still need to provide the file in JSON format and set the labels to "". Empty string labels in CSV or Excel format will be interpreted as unlabeled.
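The distinction can be illustrated with Python's standard `json` module, which writes `None` as `null` and keeps `""` as an empty string. This is only a sketch; the `label_status` helper is hypothetical and simply restates the rule from this section.

```python
import json

rows = [
    {"review_id": "f3ac", "label": ""},    # annotated: belongs to no class
    {"review_id": "a53f", "label": None},  # not yet annotated
]

# None is serialized as JSON null; the empty string stays an empty string.
serialized = json.dumps(rows, indent=2)
print(serialized)


def label_status(row):
    """Classify a row by the multi-label convention described above."""
    if row["label"] is None:
        return "unlabeled"
    if row["label"] == "":
        return "empty labels"
    return "labeled"


statuses = [label_status(row) for row in json.loads(serialized)]
print(statuses)
```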

Regression

In a regression task, the objective is to label each data point with a continuous numerical value, e.g. price, income, or age, rather than a discrete category.

To format your dataset for regression, ensure your dataset includes a label column containing the continuous numeric value you’d like to predict and follow the appropriate structure for your modality and file format. Note: you’ll need to set the column type for your label column to float before creating a regression project. See the Schema Updates section for information on how to do this.

Unlabeled data

To include unlabeled rows (i.e. rows without annotations) in your regression dataset, simply leave their values in the label column empty/blank.

See our regression tutorial for an example of using Cleanlab Studio for a regression task.

Unsupervised

For an unsupervised task, there is no target variable to predict for data points. This task type might be appropriate for your data if there is no clear “label column”. Follow the appropriate structure for your modality and file format.

See our unsupervised tutorial for an example of using Cleanlab Studio for an unsupervised task.

File Formats

Cleanlab Studio natively supports CSV, JSON, Excel, and ZIP file formats for uploading datasets. In addition, the Python API supports uploading data through Pandas, PySpark, and Snowpark DataFrames. For other common formats, see our tutorials for converting common text and image dataset formats into one of our natively supported formats.

CSV

CSV is a standard file format for storing text/tabular data, where each row represents a data record and consists of one or more fields (columns) separated by a delimiter.

Make sure your CSV dataset follows these formatting requirements:

- Each row is represented by a single line of text with fields separated by a , delimiter.

- String values containing the delimiter character (,) or special characters (e.g. newline characters) should be enclosed within double quotes (" ").

- The first row should be a header containing the names of all columns in the dataset.

- Empty fields are represented by consecutive delimiter characters with no value in between or empty double quotes ("").

- Each row should contain the same number of columns, with missing values represented as empty fields.

- Each row should be separated by a newline.

Unlabeled data

You can indicate that a data point is not yet annotated/labeled by leaving its value in the label column empty. Note: for multi-label tasks, if you need data points with empty labels, you must use the JSON file format or a DataFrame upload.

Example CSV text dataset

review_id,review,label

f3ac,The sales rep was fantastic!,positive

d7c4,He was a bit wishy-washy.,negative

439a,"They kept using the word ""obviously,"" which was off-putting.",positive

a53f,,negative
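One way to satisfy the quoting requirements above is to let a CSV library handle them. The sketch below uses Python's standard `csv` module, which encloses fields containing the delimiter or double quotes in double quotes and doubles any embedded quote characters (the usual CSV convention). The rows mirror the example dataset.

```python
import csv
import io

rows = [
    ("f3ac", "The sales rep was fantastic!", "positive"),
    ("d7c4", "He was a bit wishy-washy.", "negative"),
    ("439a", 'They kept using the word "obviously," which was off-putting.', "positive"),
    ("a53f", "", "negative"),  # empty review field
]

buffer = io.StringIO()
writer = csv.writer(buffer, lineterminator="\n")  # default dialect quotes only when needed
writer.writerow(["review_id", "review", "label"])  # header row with all column names
writer.writerows(rows)

csv_text = buffer.getvalue()
print(csv_text)
```

To write to disk instead, open the file with `newline=""` and pass the file handle to `csv.writer` in place of the in-memory buffer.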

JSON

JSON is a standard file format for storing and exchanging structured data organized using key-value pairs. In a JSON dataset, each row is represented by an object where keys correspond to column names.

Your JSON dataset should follow these formatting requirements:

- Each row is represented as a JSON object consisting of key-value pairs (separated by colons :) enclosed in curly braces {}. Each key is a string (enclosed in double quotes " ") that uniquely identifies the value associated with it.

- The rows of your dataset are enclosed in a JSON array (square brackets []) and separated by commas (,).

- Valid types for values in your dataset include strings, numbers, booleans, and null values.

- Your dataset cannot contain nested array or object values. Ensure these are flattened before uploading to Cleanlab Studio.

- Every key is present in every row of your dataset.

Unlabeled data

If you’re formatting a dataset for a multi-class or regression task, you can indicate if a data point is not yet annotated/labeled using a "" or null value.

For multi-label tasks, you must use null values to indicate data points that are not yet annotated/labeled. This allows us to distinguish between data points where no class applies (indicated by "") and data points that are not yet annotated (see the Unlabeled data vs. Empty labels section for more information on the difference between these).

Example JSON multi-label text dataset

In this example dataset each data point is a text review. The first data point f3ac is a data point with an empty label (has been annotated as belonging to none of the classes), while the last review a53f is an unlabeled data point (that has not yet been annotated). Note the difference between their label values to distinguish these cases.

[

{

"review_id": "f3ac",

"review": "The message was sent yesterday.",

"label": ""

},

{

"review_id": "d7c4",

"review": "He was a bit rude to the staff.",

"label": "negative,rude,mean"

},

{

"review_id": "439a",

"review": "They provided a wonderful experience that made us very happy.",

"label": "positive,happy,joy"

},

{

"review_id": "a53f",

"review": "Please let her know I appreciated the hospitality.",

"label": null

}

]
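Before uploading, the JSON requirements listed in this section can be checked locally. The following is a rough sketch using only the standard library; `validate_json_dataset` is a hypothetical helper, and it checks only the rules stated above (a top-level array of objects, the same keys in every row, no nested arrays or objects).

```python
import json


def validate_json_dataset(text):
    """Return the parsed rows, or raise ValueError if a stated rule is violated."""
    rows = json.loads(text)
    if not isinstance(rows, list) or not all(isinstance(row, dict) for row in rows):
        raise ValueError("dataset must be a JSON array of objects")
    if not rows:
        return rows
    expected_keys = set(rows[0])
    for index, row in enumerate(rows):
        if set(row) != expected_keys:
            raise ValueError(f"row {index} does not have the same keys as row 0")
        for key, value in row.items():
            # Nested values must be flattened before upload.
            if isinstance(value, (list, dict)):
                raise ValueError(f"row {index}, key {key!r}: nested values are not allowed")
    return rows


example = '[{"review_id": "f3ac", "label": ""}, {"review_id": "a53f", "label": null}]'
rows = validate_json_dataset(example)
print(len(rows), "rows passed validation")
```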

Excel

Excel is a popular file format for spreadsheets. Cleanlab Studio supports both .xls and .xlsx files.

Your Excel dataset should follow these formatting requirements:

- Only the first sheet of your spreadsheet will be imported as your dataset.

- The first row of your sheet should contain names for all of the columns.

Unlabeled data

You can indicate that a data point is not yet annotated/labeled by leaving its value in the label column empty. Note: for multi-label tasks, if you need data points with empty labels, you must use the JSON file format or a DataFrame upload.

Example Excel text dataset

| _ | review_id | review | label |

|---|---|---|---|

| 0 | f3ac | The sales rep was fantastic! | positive |

| 1 | d7c4 | He was a bit wishy-washy. | negative |

| 2 | 439a | They kept using the word “obviously,” which wa… | positive |

| 3 | a53f | | negative |

DataFrame

Cleanlab Studio’s Python API supports several DataFrame formats, including Pandas, PySpark, and Snowpark DataFrames. You can upload directly from a DataFrame in a Python script or Jupyter notebook.

See our Databricks integration and Snowflake integration for more details on uploading from PySpark and Snowpark DataFrames.

Unlabeled data

If you’re formatting a dataset for a multi-class or regression task, you can indicate if a data point is not yet annotated/labeled using a "", None, or pd.NA value (or the equivalent null value for PySpark/Snowpark).

For multi-label tasks, you must use None or pd.NA values to indicate data points that are not yet annotated/labeled. This allows us to distinguish between data points where no class applies (indicated by "") and data points that are not yet annotated (see the Unlabeled data vs. Empty labels section for more information on the difference between these).

Example DataFrame text dataset

| _ | review_id | review | label |

|---|---|---|---|

| 0 | f3ac | The sales rep was fantastic! | positive |

| 1 | d7c4 | He was a bit wishy-washy. | negative |

| 2 | 439a | They kept using the word “obviously,” which wa… | positive |

| 3 | a53f | | negative |

ZIP

ZIP files are commonly used for storing and transferring multiple files/directories as a single compressed file. You can upload a dataset with image files to Cleanlab Studio using a ZIP file. Supported structures for organizing your ZIP file are outlined below.

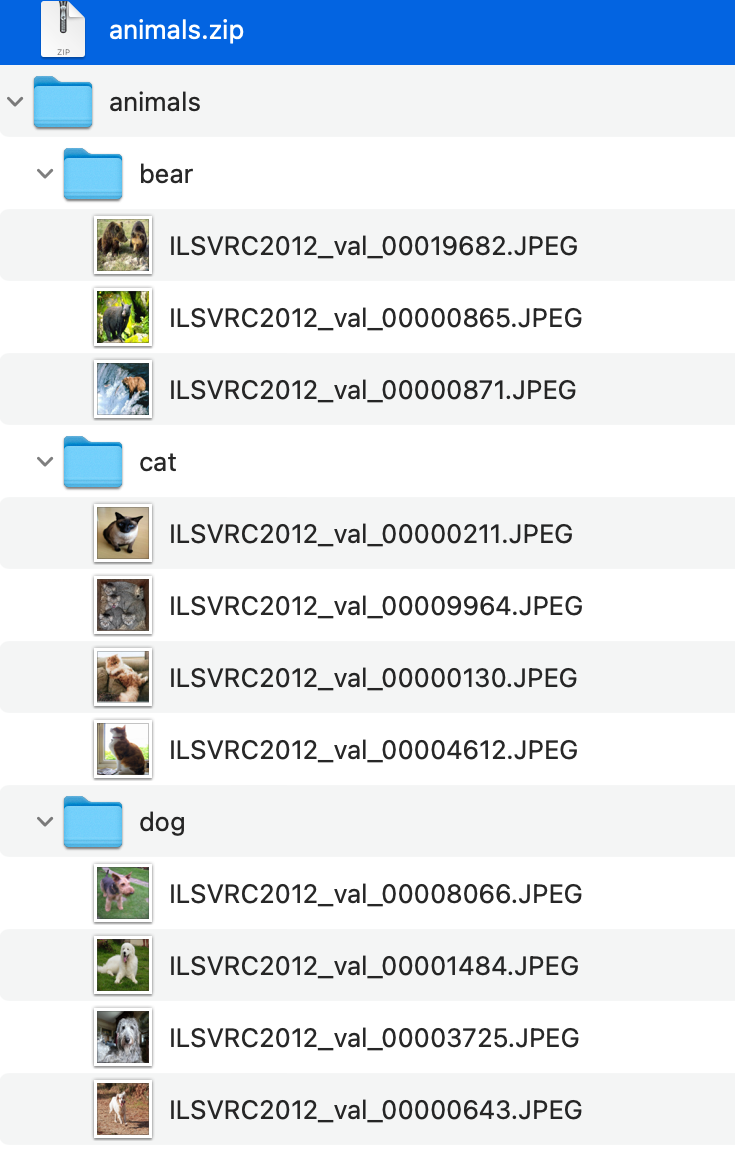

Simple ZIP

Note: Simple ZIP format is only supported for multi-class datasets.

To format your dataset as a simple ZIP upload:

- Create a top-level folder for your dataset.

- Inside the top-level folder, create a folder for each class in your dataset. The name of each folder will be used as the class label for images within the folder.

- Inside each class folder, add image files belonging to the class.

- ZIP the top-level folder.

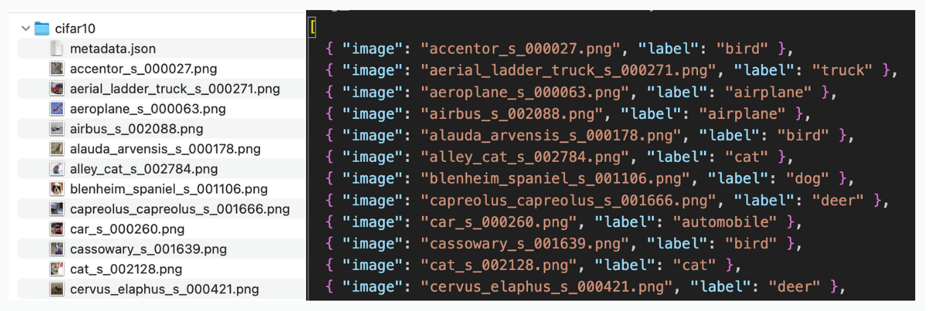
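The Simple ZIP layout above can be produced with Python's standard `zipfile` module. This is a minimal sketch: the function name `make_simple_zip`, the default top-level folder name `dataset`, and the file names in the usage comment are all hypothetical.

```python
import zipfile
from pathlib import Path


def make_simple_zip(images_by_class, zip_path, top_level="dataset"):
    """Write images into <top_level>/<class name>/<file name> inside a ZIP archive.

    images_by_class maps each class label to a list of image file paths.
    """
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for class_name, paths in images_by_class.items():
            for path in paths:
                path = Path(path)
                # The folder name becomes the class label for the image.
                archive.write(path, arcname=f"{top_level}/{class_name}/{path.name}")
    return Path(zip_path)


# Example call (paths are placeholders):
# make_simple_zip({"cat": ["photos/c1.jpg"], "dog": ["photos/d1.jpg"]}, "animals.zip")
```

Note that two files with the same name in the same class would map to the same archive path, so file names should be unique within each class.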

Metadata ZIP

If you are uploading an image dataset for a multi-label, regression, or unsupervised task or would like to include metadata associated with images in your dataset, you can include either a metadata.csv or metadata.json file in your ZIP dataset.

To format your dataset as a ZIP with metadata upload:

- Create a top-level folder for your dataset.

- Add image files for your dataset to the folder. These files can optionally be organized within subfolders.

- Add a metadata.csv or metadata.json file inside your top-level folder.

- ZIP the top-level folder.

Your metadata file (metadata.csv or metadata.json) should follow these formatting requirements:

- The file should contain a column named image. The values in this column should correspond to the relative paths to images from your metadata.csv or metadata.json file.

- If uploading a dataset for a multi-class, multi-label, or regression task, the file should include a column for the labels corresponding to each image.

- The file can optionally include other columns with additional metadata that you would like to view or filter by in Cleanlab Studio (these columns will not be used for project training).

- See the CSV and JSON file format sections for more details specific to each file type.
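A metadata.csv file meeting these requirements can be generated from the folder contents. The sketch below is illustrative only: `write_metadata_csv`, the `label` column name, and the set of image file extensions are assumptions, not part of Cleanlab Studio.

```python
import csv
from pathlib import Path

# Assumed set of image extensions; adjust to match your files.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def write_metadata_csv(top_level, labels):
    """Write metadata.csv in top_level with an image column of relative paths.

    labels maps a relative image path (POSIX style) to its label string.
    Images missing from labels get an empty label field.
    """
    top_level = Path(top_level)
    metadata_path = top_level / "metadata.csv"
    image_paths = sorted(
        p for p in top_level.rglob("*")
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    with metadata_path.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(["image", "label"])
        for path in image_paths:
            # Paths are written relative to the metadata file's folder.
            relative = path.relative_to(top_level).as_posix()
            writer.writerow([relative, labels.get(relative, "")])
    return metadata_path
```

Because a multi-label value such as cat,indoor contains the delimiter, the `csv` module encloses it in double quotes automatically.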

Schemas

Schemas define the type of each column in your dataset. This allows Cleanlab Studio to understand the structure of your data and provide the best possible analysis and model training experience.

Cleanlab Studio supports the following column types:

| Column Type | Sub-types | Override Name |

|---|---|---|

| Untyped | - | untyped |

| Integer | - | integer |

| Float | - | float |

| Boolean | - | boolean |

| String | - | string |

| Image | - | - |

| External Image | - | image_external |

| Date | Seconds (epoch), Milliseconds (epoch), Microseconds (epoch), Nanoseconds (epoch), Parse | date_epoch_s, date_epoch_ms, date_epoch_us, date_epoch_ns, date_parse |

| Datetime | Seconds (epoch), Milliseconds (epoch), Microseconds (epoch), Nanoseconds (epoch), Parse | datetime_epoch_s, datetime_epoch_ms, datetime_epoch_us, datetime_epoch_ns, datetime_parse |

| Time | Seconds (epoch), Milliseconds (epoch), Microseconds (epoch), Nanoseconds (epoch), Parse | time_epoch_s, time_epoch_ms, time_epoch_us, time_epoch_ns, time_parse |

By default, Cleanlab Studio sets all columns to Untyped, which interprets all columns as text.

This can be overridden by:

- Updating the schema in the UI after uploading your dataset

- Providing a schema override when uploading your dataset (via Python Client)

See the Schema Updates section for more information.

Column Types

Untyped

This is the default type for columns in Cleanlab Studio. It is used when the type of a column is not specified.

Columns with type Untyped are interpreted as text.

Integer

Columns with type Integer are interpreted as 64-bit integer numbers.

Float

Columns with type Float are interpreted as 64-bit floating point numbers.

Boolean

Columns with type Boolean are interpreted as boolean values.

The following values are interpreted as True: true, yes, on, 1.

The following values are interpreted as False: false, no, off, 0.

Unique prefixes of these strings are also accepted, for example t or n. Leading or trailing whitespace is ignored, and case does not matter.
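The matching rules above can be sketched in a few lines of Python. This is an illustration of the documented behavior, not Cleanlab Studio's implementation; in particular, rejecting an ambiguous prefix such as o (which could mean on or off) is an assumption.

```python
TRUE_WORDS = ("true", "yes", "on", "1")
FALSE_WORDS = ("false", "no", "off", "0")


def parse_boolean(value):
    """Interpret a string as a boolean using case-insensitive unique prefixes."""
    token = value.strip().lower()  # ignore surrounding whitespace and case
    if not token:
        raise ValueError("empty value")
    outcomes = set()
    for words, flag in ((TRUE_WORDS, True), (FALSE_WORDS, False)):
        if any(word.startswith(token) for word in words):
            outcomes.add(flag)
    if len(outcomes) != 1:
        # Either no word matches or the prefix matches both a true and a false word.
        raise ValueError(f"not a recognizable boolean: {value!r}")
    return outcomes.pop()


print(parse_boolean(" T "), parse_boolean("n"), parse_boolean("OFF"))
```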

String

Columns with type String are interpreted as text.

Image

Columns with type Image are interpreted as image references. This type is used for ZIP image datasets and cannot be set manually. A column with type Image also cannot be changed to another type.

External Image

Columns with type External Image are interpreted as URLs to external images. This type is used for external media image datasets.

Example external media image dataset

| _ | img | label |

|---|---|---|

| 0 | https://s.cleanlab.ai/DCA_Competition_2023_Dat… | c |

| 1 | https://s.cleanlab.ai/DCA_Competition_2023_Dat… | h |

| 2 | https://s.cleanlab.ai/DCA_Competition_2023_Dat… | y |

| 3 | https://s.cleanlab.ai/DCA_Competition_2023_Dat… | p |

| 4 | https://s.cleanlab.ai/DCA_Competition_2023_Dat… | j |

Date

Columns with type Date are interpreted as dates. The column sub-types specify how the data is converted into a date.

For example, date_epoch_s specifies that the column contains Unix timestamps in seconds.

date_parse specifies that the column contains date strings, which are parsed using one of the following formats:

| Format | Example | Description |

|---|---|---|

| %Y-%m-%d | 1999-02-15 | ISO 8601 format |

| %Y/%m/%d | 1999/02/15 | |

| %B %d, %Y | February 15, 1999 | |

| %Y-%b-%d | 1999-Feb-15 | |

| %d-%m-%Y | 15-02-1999 | |

| %d/%m/%Y | 15/02/1999 | |

| %d-%b-%Y | 15-Feb-1999 | |

| %b-%d-%Y | Feb-15-1999 | |

| %d-%b-%y | 15-Feb-99 | |

| %b-%d-%y | Feb-15-99 | |

| %Y%m%d | 19990215 | |

| %y%m%d | 990215 | |

| %Y.%j | 1999.46 | year and day of year |

| %m-%d-%Y | 02-15-1999 | |

| %m/%d/%Y | 02/15/1999 | |

For more information on format codes, see the Python documentation.
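To check locally whether your date strings match one of the listed formats, you can try each format with Python's `datetime.strptime`. This is a sketch under stated assumptions: the formats are tried in table order, and how Cleanlab Studio itself resolves inputs that match more than one format is not specified here. Month names are matched according to the current locale (English by default).

```python
from datetime import date, datetime

# Formats copied from the table above, tried in table order (an assumption).
DATE_FORMATS = [
    "%Y-%m-%d", "%Y/%m/%d", "%B %d, %Y", "%Y-%b-%d", "%d-%m-%Y",
    "%d/%m/%Y", "%d-%b-%Y", "%b-%d-%Y", "%d-%b-%y", "%b-%d-%y",
    "%Y%m%d", "%y%m%d", "%Y.%j", "%m-%d-%Y", "%m/%d/%Y",
]


def parse_date(text):
    """Return the date for the first listed format that matches the whole string."""
    cleaned = text.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue  # this format did not match; try the next one
    raise ValueError(f"no listed format matches {text!r}")


for sample in ["1999-02-15", "February 15, 1999", "15-Feb-99", "1999.46"]:
    print(sample, "->", parse_date(sample).isoformat())
```

With this ordering, an ambiguous string such as 02-03-1999 is read day-first (2 March 1999), so it is safer to use an unambiguous format like %Y-%m-%d where possible.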

Time

Columns with type Time are interpreted as times. The column sub-types specify how the data is converted to a time.

For example, time_epoch_us specifies that the column contains Unix timestamps in microseconds.

time_parse specifies that the column contains time strings, which are parsed using one of the following formats:

| Format | Example | Description |

|---|---|---|

| %H:%M:%S.%f | 04:05:06.789 | ISO 8601 format |

| %H:%M:%S | 04:05:06 | ISO 8601 format |

| %H:%M | 04:05 | ISO 8601 format |

| %H%M%S | 040506 | |

| %H%M | 0405 | |

| %H%M%S.%f | 040506.789 | |

| %I:%M %p | 04:05 AM | |

| %I:%M:%S %p | 04:05:06 PM | |

| %H:%M:%S.%f%z | 04:05:06.789-08:00 | ISO 8601 format with UTC offset for PST timezone |

| %H:%M:%S%z | 04:05:06-08:00 | |

| %H:%M%z | 04:05-08:00 | |

| %H%M%S.%f%z | 040506.789-08:00 | |

| %H:%M:%S.%f %Z | 04:05:06.789 PST | Note: most common timezone abbreviations are supported, but not all. See full list in section below. |

| %H:%M:%S %Z | 04:05:06 PST | |

| %H:%M %Z | 04:05 PST | |

| %H%M %Z | 0405 PST | |

| %H%M%S.%f %Z | 040506.789 PST | |

| %I:%M %p %Z | 04:05 AM PST | |

| %I:%M:%S %p %Z | 04:05:06 PM PST | |

For more information on format codes, see the Python documentation.

Supported Time Zone Abbreviations

| Time Zone | UTC Offset | Description |

|---|---|---|

| NZDT | +13:00 | New Zealand Daylight Time |

| IDLE | +12:00 | International Date Line, East |

| NZST | +12:00 | New Zealand Standard Time |

| NZT | +12:00 | New Zealand Time |

| AESST | +11:00 | Australia Eastern Summer Standard Time |

| ACSST | +10:30 | Central Australia Summer Standard Time |

| CADT | +10:30 | Central Australia Daylight Savings Time |

| SADT | +10:30 | South Australian Daylight Time |

| AEST | +10:00 | Australia Eastern Standard Time |

| EAST | +10:00 | East Australian Standard Time |

| GST | +10:00 | Guam Standard Time |

| LIGT | +10:00 | Melbourne, Australia |

| SAST | +09:30 | South Australia Standard Time |

| CAST | +09:30 | Central Australia Standard Time |

| AWSST | +09:00 | Australia Western Summer Standard Time |

| JST | +09:00 | Japan Standard Time |

| KST | +09:00 | Korea Standard Time |

| MHT | +09:00 | Kwajalein Time |

| WDT | +09:00 | West Australian Daylight Time |

| MT | +08:30 | Moluccas Time |

| AWST | +08:00 | Australia Western Standard Time |

| CCT | +08:00 | China Coastal Time |

| WADT | +08:00 | West Australian Daylight Time |

| WST | +08:00 | West Australian Standard Time |

| JT | +07:30 | Java Time |

| ALMST | +07:00 | Almaty Summer Time |

| WAST | +07:00 | West Australian Standard Time |

| CXT | +07:00 | Christmas (Island) Time |

| ALMT | +06:00 | Almaty Time |

| MAWT | +06:00 | Mawson (Antarctica) Time |

| IOT | +05:00 | Indian Chagos Time |

| MVT | +05:00 | Maldives Island Time |

| TFT | +05:00 | Kerguelen Time |

| AFT | +04:30 | Afghanistan Time |

| EAST | +04:00 | Antananarivo Savings Time |

| MUT | +04:00 | Mauritius Island Time |

| RET | +04:00 | Reunion Island Time |

| SCT | +04:00 | Mahe Island Time |

| IT | +03:30 | Iran Time |

| EAT | +03:00 | Antananarivo, Comoro Time |

| BT | +03:00 | Baghdad Time |

| EETDST | +03:00 | Eastern Europe Daylight Savings Time |

| HMT | +03:00 | Hellas Mediterranean Time (?) |

| BDST | +02:00 | British Double Standard Time |

| CEST | +02:00 | Central European Savings Time |

| CETDST | +02:00 | Central European Daylight Savings Time |

| EET | +02:00 | Eastern Europe, USSR Zone 1 |

| FWT | +02:00 | French Winter Time |

| IST | +02:00 | Israel Standard Time |

| MEST | +02:00 | Middle Europe Summer Time |

| METDST | +02:00 | Middle Europe Daylight Time |

| SST | +02:00 | Swedish Summer Time |

| BST | +01:00 | British Summer Time |

| CET | +01:00 | Central European Time |

| DNT | +01:00 | Dansk Normal Tid |

| FST | +01:00 | French Summer Time |

| MET | +01:00 | Middle Europe Time |

| MEWT | +01:00 | Middle Europe Winter Time |

| MEZ | +01:00 | Middle Europe Zone |

| NOR | +01:00 | Norway Standard Time |

| SET | +01:00 | Seychelles Time |

| SWT | +01:00 | Swedish Winter Time |

| WETDST | +01:00 | Western Europe Daylight Savings Time |

| GMT | +00:00 | Greenwich Mean Time |

| UT | +00:00 | Universal Time |

| UTC | +00:00 | Universal Time, Coordinated |

| Z | +00:00 | Same as UTC |

| ZULU | +00:00 | Same as UTC |

| WET | +00:00 | Western Europe |

| WAT | -01:00 | West Africa Time |

| NDT | -02:30 | Newfoundland Daylight Time |

| ADT | -03:00 | Atlantic Daylight Time |

| AWT | -03:00 | (unknown) |

| NFT | -03:30 | Newfoundland Standard Time |

| NST | -03:30 | Newfoundland Standard Time |

| AST | -04:00 | Atlantic Standard Time (Canada) |

| ACST | -04:00 | Atlantic/Porto Acre Summer Time |

| ACT | -05:00 | Atlantic/Porto Acre Standard Time |

| EDT | -04:00 | Eastern Daylight Time |

| CDT | -05:00 | Central Daylight Time |

| EST | -05:00 | Eastern Standard Time |

| CST | -06:00 | Central Standard Time |

| MDT | -06:00 | Mountain Daylight Time |

| MST | -07:00 | Mountain Standard Time |

| PDT | -07:00 | Pacific Daylight Time |

| AKDT | -08:00 | Alaska Daylight Time |

| PST | -08:00 | Pacific Standard Time |

| YDT | -08:00 | Yukon Daylight Time |

| AKST | -09:00 | Alaska Standard Time |

| HDT | -09:00 | Hawaii/Alaska Daylight Time |

| YST | -09:00 | Yukon Standard Time |

| AHST | -10:00 | Alaska-Hawaii Standard Time |

| HST | -10:00 | Hawaii Standard Time |

| CAT | -10:00 | Central Alaska Time |

| NT | -11:00 | Nome Time |

| IDLW | -12:00 | International Date Line, West |
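If you want to check time strings with zone abbreviations locally, note that Python's own `%Z` directive only recognizes UTC, GMT, and the local zone names, so abbreviations like PST need a lookup table. The sketch below copies a few rows from the table above into a dictionary; `parse_time`, the subset of abbreviations, and the subset of time formats are illustrative choices, not Cleanlab Studio's implementation.

```python
from datetime import datetime, time, timedelta, timezone

# A few entries copied from the abbreviation table above.
TZ_OFFSETS = {
    "PST": timedelta(hours=-8),
    "UTC": timedelta(0),
    "JST": timedelta(hours=9),
    "NST": timedelta(hours=-3, minutes=-30),
}

# A subset of the time formats listed earlier (without the zone part).
TIME_FORMATS = ["%H:%M:%S.%f", "%H:%M:%S", "%H:%M", "%I:%M:%S %p", "%I:%M %p"]


def parse_time(text):
    """Parse a time string with an optional trailing zone abbreviation."""
    body = text.strip()
    tzinfo = None
    head, _, last = body.rpartition(" ")
    if head and last.upper() in TZ_OFFSETS:
        # Strip the abbreviation and remember its fixed UTC offset.
        tzinfo = timezone(TZ_OFFSETS[last.upper()], last.upper())
        body = head
    for fmt in TIME_FORMATS:
        try:
            return datetime.strptime(body, fmt).time().replace(tzinfo=tzinfo)
        except ValueError:
            continue
    raise ValueError(f"unsupported time string: {text!r}")


print(parse_time("04:05:06.789 PST").isoformat())
```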

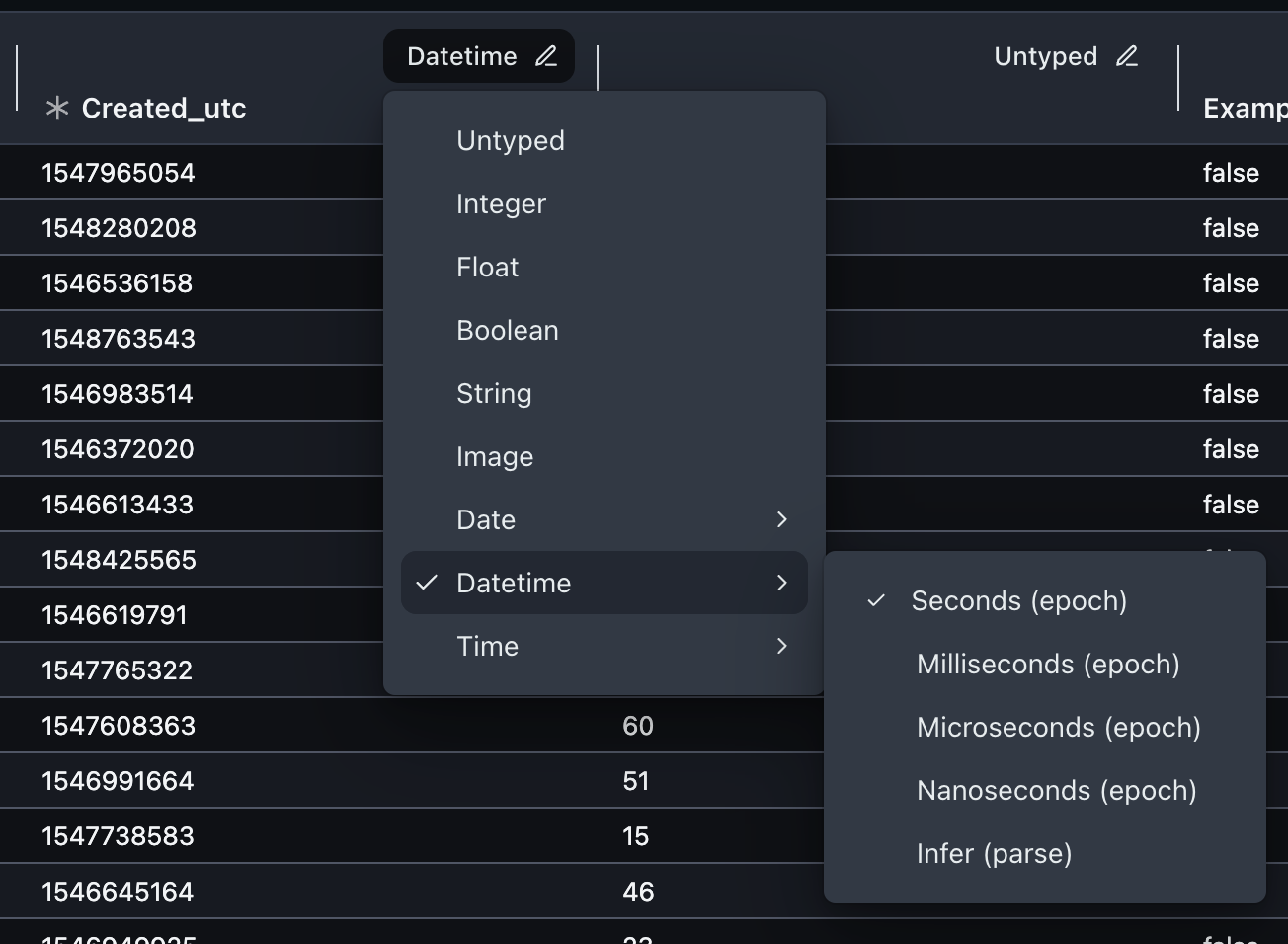

Datetime

Columns with type Datetime are interpreted as datetimes. The column sub-types specify how the data is converted to a datetime.

For example, datetime_epoch_ms specifies that the column contains Unix timestamps in milliseconds.

datetime_parse specifies that the column contains datetimes in a custom format. Some examples of supported formats are listed below (we support any combination of the date and time formats):

| Format | Example |

|---|---|

| %Y-%m-%dT%H:%M:%S.%f | 1999-02-15T04:05:06.789 |

| %Y/%m/%d %H:%M | 1999/02/15 04:05 |

| %B %d, %Y %I:%M %p %Z | February 15, 1999 04:05 AM PST |

| %Y%m%dT%H%M%S | 19990215T040506 |

For more information on format codes, see the Python documentation.
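To see how the epoch sub-types relate to each other, the sketch below converts the same instant from seconds, milliseconds, microseconds, and nanoseconds since the Unix epoch into a Python `datetime`. The helper `from_epoch` is hypothetical. Python datetimes have microsecond resolution, so nanosecond values are truncated here.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
MICROSECONDS_PER_UNIT = {"s": 1_000_000, "ms": 1_000, "us": 1}


def from_epoch(value, unit):
    """Convert an integer Unix timestamp in the given unit to an aware UTC datetime."""
    if unit == "ns":
        micros = value // 1_000  # drop sub-microsecond digits
    else:
        micros = value * MICROSECONDS_PER_UNIT[unit]
    # Integer arithmetic avoids floating point rounding on large timestamps.
    return EPOCH + timedelta(microseconds=micros)


stamp_ms = 919051506789  # the datetime_epoch_ms form of 1999-02-15T04:05:06.789 UTC
parsed = datetime.strptime("1999-02-15T04:05:06.789", "%Y-%m-%dT%H:%M:%S.%f")

print(from_epoch(stamp_ms, "ms").isoformat())
print(from_epoch(stamp_ms, "ms") == parsed.replace(tzinfo=timezone.utc))
```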

Schema Updates

There are two ways to update the schema for your dataset:

- Using the Studio UI after uploading your dataset.

- Providing a schema override when uploading your dataset (via Python Client). You can provide partial schema overrides by specifying column types for a subset of columns. You do not need to provide overrides for all columns in your dataset.

[

{

"name": "<name of column to override>",

"column_type": "<column type to update to>"

}

]
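A schema override in the structure shown above can be built and checked in Python before it is handed to the Python client. This is a sketch: `make_schema_overrides` is a hypothetical helper, the `created_at` column is a made-up example, and the set of allowed names is copied from the Override Name column of the column-type table. Consult the Python client documentation for how to pass the resulting list when uploading.

```python
import json

# Override names taken from the column-type table in the Schemas section.
OVERRIDE_NAMES = {"untyped", "integer", "float", "boolean", "string", "image_external"} | {
    f"{kind}_{sub}"
    for kind in ("date", "datetime", "time")
    for sub in ("epoch_s", "epoch_ms", "epoch_us", "epoch_ns", "parse")
}


def make_schema_overrides(column_types):
    """Build a partial schema override list from a {column name: override name} mapping."""
    unknown = sorted(t for t in column_types.values() if t not in OVERRIDE_NAMES)
    if unknown:
        raise ValueError(f"unknown column types: {unknown}")
    return [{"name": name, "column_type": ctype} for name, ctype in column_types.items()]


# Set the label column to float for a regression project; created_at is hypothetical.
overrides = make_schema_overrides({"label": "float", "created_at": "datetime_epoch_ms"})
print(json.dumps(overrides, indent=2))
```

Columns that are not listed keep their default type, since partial overrides are allowed.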